How I built a CDN for our multi-tenant app within a day

Ok - before I begin let me give you some context. The multi-tenant app (or SaaS product) in question is Hashnode.dev. It's a new blogging platform that lets developers run a personal blog for free without worrying about ads or paywalls. We launched this in April as a closed beta and are testing things out with around 100 developers.

To understand the CDN thingy we are talking about, you need to know how custom domains work on hashnode.dev. So, here we go:

- When you sign up on Hashnode and unlock your blog, you get a free .hashnode.dev subdomain, e.g. https://sandeep.hashnode.dev

- If you would like to use your own domain, you can just point it to our IP. We'll also generate a free LetsEncrypt certificate for your domain and renew it automatically.

After we built the whole thing, we realized that the TTFB values for these blogs were more than 100ms. So, we asked ourselves "how do we speed up the content delivery for these custom domains while serving them over HTTPS?".

The APIs and DB calls were already optimized. The only thing that was missing was a CDN. We also wanted the CDN to support custom SSL certs for individual domains. Like any other engineer, I tried out a bunch of solutions and tried to use an existing CDN instead of rolling out our own. The ideal CDN had to meet the following criteria:

- Ability to cache HTML, JSON and varieties of other formats.

- Ability to generate SSL for our customers on demand.

- Must be affordable and easy to integrate with our product.

- It's 2019 and we have LetsEncrypt today. So, no extra charge for generating SSL.

Here are a few solutions I tried out:

Cloudflare SSL for SaaS

Cloudflare offers something called SSL for SaaS providers which handles exactly this. They will generate an SSL cert for your customers' domains. So, your customers get free SSL + caching by Cloudflare. What's not to like? Well, right after I discovered this, I also quickly realized that this feature is available only in the Enterprise plan, which costs around $5K USD per month. It's obviously beyond the reach of a small startup like us. So, it was a no go.

AWS Cloudfront

We can always create a Cloudfront distribution for each customer and ask them to add a CNAME record that points to the distribution URL. Amazon Certificate Manager (ACM) certs are free as long as you are using them with Cloudfront. So, no extra charge for SSL. However, it had three drawbacks:

- There is a limit to the number of Cloudfront distributions you can create per account. You have to request Amazon to lift the limit periodically.

- CDN cost is going to skyrocket once you scale.

- The process was complex. Our users had to validate ownership of their domain first which took several hours in some cases.

Still, we went with this approach for the first few users, but kept looking for better solutions.

Fly

Honestly, fly.io looks really promising. I wanted to give it a try, but the price of SSL ($1/month per 10 certs) stopped me. At some point if we have 10,000 users, SSL will cost us $1000 per month. If, for any reason, I couldn't roll out my own CDN I would have gone ahead with them. ✌

I further explored KeyCDN, StackPath and almost every other mainstream CDN solution out there. But none of them supported edge caching with free SSL. At this point, I decided that I would try building a simple CDN for our use case.

So, I grouped the tasks into the following 3 steps:

DNS Resolution

One of the core responsibilities of a CDN is to serve content to end users from a location that's geographically closest to them. So, I needed a DNS provider that resolves a hostname into IP addresses based on geolocation. The first service that came to my mind was AWS Route 53. It's cheap, fast and easy to use. You pay $0.50 per hosted zone per month and $0.70 per million queries for the first 1 billion queries per month.
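To get a feel for those numbers, here is a rough estimate in Node.js. It's only a sketch: the prices are the ones quoted above (they may have changed since), and the function name `estimateRoute53Cost` is made up for this example.

```javascript
// Prices as quoted in this article, in US cents; verify against current Route 53 pricing.
const HOSTED_ZONE_CENTS = 50;           // $0.50 per hosted zone per month
const GEO_QUERY_CENTS_PER_MILLION = 70; // $0.70 per million queries (first 1 billion)

// Estimated monthly bill in USD. Only the first pricing tier is modelled.
function estimateRoute53Cost(hostedZones, queriesPerMonth) {
  if (queriesPerMonth > 1e9) {
    throw new RangeError('this sketch only covers the first 1 billion queries');
  }
  const cents = hostedZones * HOSTED_ZONE_CENTS +
    (queriesPerMonth / 1e6) * GEO_QUERY_CENTS_PER_MILLION;
  return cents / 100;
}

// One hosted zone answering 50 million queries a month.
console.log(estimateRoute53Cost(1, 50e6)); // 35.5
```

So even at tens of millions of DNS queries a month, the DNS part of the bill stays in the tens of dollars.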

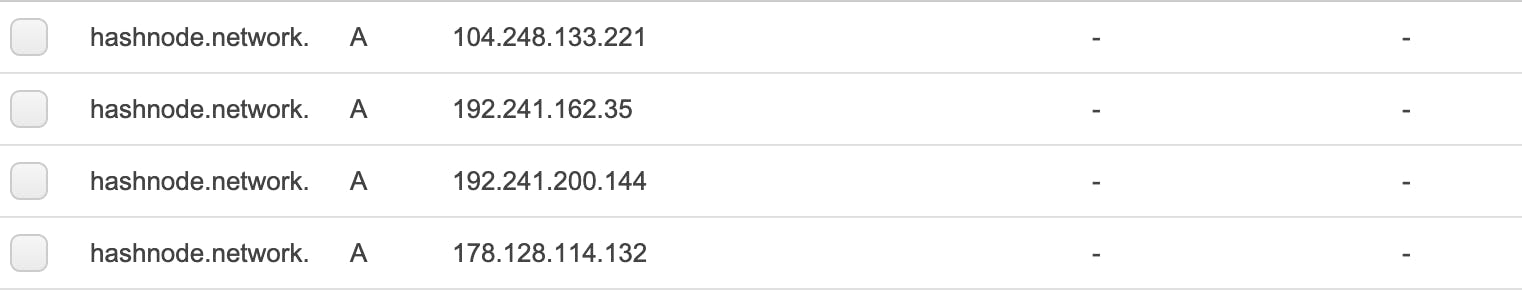

So, I went ahead and added our domain hashnode.network to Route 53. Then I created multiple A records based on geolocation. The initial few records looked like this:

I started with 4 servers in the following locations:

- Frankfurt

- Singapore

- New York

- San Francisco

Now, we could just ask our users to add a CNAME record to their DNS whose value is hashnode.network and Route 53 will automatically resolve it to an IP address that's geographically closest.
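If you'd rather script those records than click through the console, something along these lines could work. Treat it as a sketch: the IPs are placeholders from the documentation range, the region-to-node mapping is my assumption for illustration (not our exact setup), and `buildChangeBatch` is a hypothetical helper. It only builds the `ChangeBatch` object in the shape that Route 53's `ChangeResourceRecordSets` call expects; sending it (together with your hosted zone ID) is left to the AWS SDK or CLI.

```javascript
// Hypothetical edge nodes. IPs are placeholders from 203.0.113.0/24 (reserved for docs).
const edgeNodes = [
  { id: 'frankfurt', ip: '203.0.113.10', geo: { ContinentCode: 'EU' } },
  { id: 'singapore', ip: '203.0.113.20', geo: { ContinentCode: 'AS' } },
  { id: 'new-york', ip: '203.0.113.30', geo: { CountryCode: 'US', SubdivisionCode: 'NY' } },
  // Default record: answers queries that match none of the locations above.
  { id: 'san-francisco', ip: '203.0.113.40', geo: { CountryCode: '*' } },
];

// Build one geolocation A record per edge node, all sharing the same record name.
function buildChangeBatch(recordName, nodes, ttl = 300) {
  return {
    Comment: 'Geolocation A records for edge nodes',
    Changes: nodes.map((node) => ({
      Action: 'UPSERT',
      ResourceRecordSet: {
        Name: recordName,
        Type: 'A',
        SetIdentifier: node.id, // must be unique among records with the same name and type
        GeoLocation: node.geo,
        TTL: ttl,
        ResourceRecords: [{ Value: node.ip }],
      },
    })),
  };
}

const batch = buildChangeBatch('hashnode.network', edgeNodes);
console.log(JSON.stringify(batch.Changes[0].ResourceRecordSet, null, 2));
```

The default record matters: without it, visitors from a location that no record covers would get no answer at all.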

SSL at Edge

If you've read my previous article, you might know that we've been terminating SSL at our origin server which is in SF. Now if we want to serve content from an edge location we must terminate SSL at the specific edge node. So, what do we do?

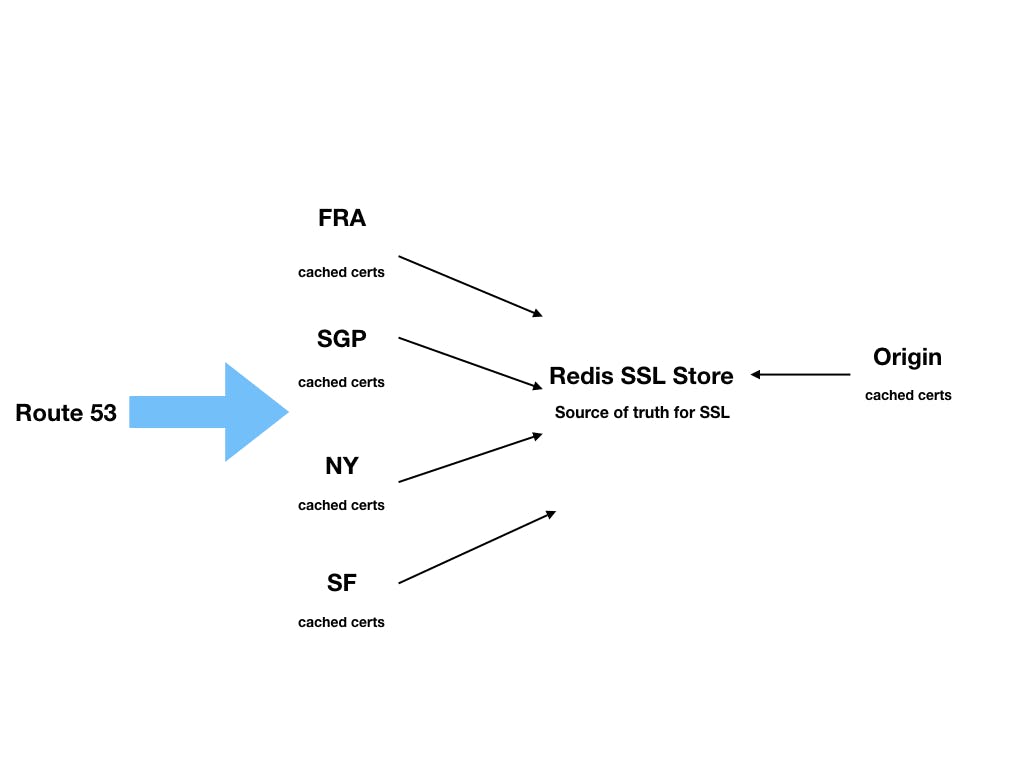

To give you some context, our origin server uses OpenResty with lua-resty-auto-ssl to generate certs on demand. However, these certificates are stored on disk and cached in memory. So, the edge nodes didn't have access to these certificates. Fortunately, lua-resty-auto-ssl supports redis as a storage adapter. So, I spun up a redis server in SF and switched the cert storage from disk to redis. Now, certificates would be stored in redis and cached in server memory.

The next step was to install OpenResty on each edge node and configure them to use the same redis instance to store the certificates. So, if one edge node issues a cert, it's automatically accessible to all the nodes. When a request comes to an edge node, it always checks if it has the cert in memory. If not, it fetches it from redis and caches it locally. This way each edge node and the origin have access to all the SSL certs and we can safely terminate SSL at the edge level. Here's what the architecture looks like:

This way we are able to generate unlimited SSL certs without any extra cost.
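To make the lookup order concrete, here is a tiny simulation of it in Node.js. To be clear, this is not how lua-resty-auto-ssl is implemented (that runs as Lua inside OpenResty and talks to a real redis server); it's just the memory, then shared store, then issue flow described above. The `EdgeNode` class, the `issueCert` callback and the plain `Map` standing in for redis are all invented for this illustration.

```javascript
// One EdgeNode per server. Every node gets the same sharedStore (a stand-in for redis).
class EdgeNode {
  constructor(name, sharedStore, issueCert) {
    this.name = name;
    this.sharedStore = sharedStore; // shared by all nodes
    this.issueCert = issueCert;     // hypothetical callback that obtains a new cert
    this.memory = new Map();        // local, per-node cache
  }

  getCertificate(domain) {
    // 1. Fast path: the cert is already cached in this node's memory.
    if (this.memory.has(domain)) {
      return { cert: this.memory.get(domain), source: 'memory' };
    }
    // 2. Another node may have issued it already: check the shared store.
    let cert = this.sharedStore.get(domain);
    let source = 'shared-store';
    // 3. Nobody has it yet: issue it and publish it for the other nodes.
    if (cert === undefined) {
      cert = this.issueCert(domain);
      this.sharedStore.set(domain, cert);
      source = 'issued';
    }
    this.memory.set(domain, cert);
    return { cert, source };
  }
}

const sharedStore = new Map();
const fakeIssue = (domain) => `fake-cert-for-${domain}`;
const frankfurt = new EdgeNode('frankfurt', sharedStore, fakeIssue);
const singapore = new EdgeNode('singapore', sharedStore, fakeIssue);

console.log(frankfurt.getCertificate('blog.example.com').source); // issued
console.log(singapore.getCertificate('blog.example.com').source); // shared-store
console.log(singapore.getCertificate('blog.example.com').source); // memory
```

The cert is issued exactly once, no matter which edge node sees the domain first.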

Cache Layer

This is the easy part. I wrote a simple Node.js app which sits behind the OpenResty reverse proxy and caches HTML responses on the file system. Every blog on Hashnode has two cacheable parts: the home page and blog posts. Whenever a request comes in, the app checks if the edge location has a cached version. If yes, it simply serves it. Otherwise, it makes an HTTP call to the origin (over SSL), serves the HTML to the client and caches it on disk for a month.
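The core of such a cache fits in a few functions. The sketch below is not our production code. It assumes a layout of one directory per host with one file per URL path (both hashed so that nothing from the request ends up in a file name), uses the file's modification time for the one-month expiry, and takes the origin call as a `fetchFromOrigin` parameter. All names here are hypothetical.

```javascript
const crypto = require('crypto');
const fs = require('fs');
const path = require('path');

const ONE_MONTH_MS = 30 * 24 * 60 * 60 * 1000;

const sha256 = (text) => crypto.createHash('sha256').update(text).digest('hex');

// Assumed layout: <cacheDir>/<hash of host>/<hash of URL path>.html
function cacheFileFor(cacheDir, host, urlPath) {
  return path.join(cacheDir, sha256(host.toLowerCase()), `${sha256(urlPath)}.html`);
}

// Returns the cached HTML, or null on a miss or when the entry is older than a month.
function readCached(cacheDir, host, urlPath, now = Date.now()) {
  const file = cacheFileFor(cacheDir, host, urlPath);
  try {
    const { mtimeMs } = fs.statSync(file);
    if (now - mtimeMs > ONE_MONTH_MS) return null; // expired
    return fs.readFileSync(file, 'utf8');
  } catch (err) {
    if (err.code === 'ENOENT') return null; // nothing cached yet
    throw err;
  }
}

function writeCached(cacheDir, host, urlPath, html) {
  const file = cacheFileFor(cacheDir, host, urlPath);
  fs.mkdirSync(path.dirname(file), { recursive: true });
  fs.writeFileSync(file, html);
}

// Serve from the edge cache when possible; otherwise ask the origin and store the result.
// fetchFromOrigin(host, urlPath) must return a Promise that resolves to the HTML string.
async function getPage(cacheDir, host, urlPath, fetchFromOrigin) {
  const cached = readCached(cacheDir, host, urlPath);
  if (cached !== null) return { html: cached, cacheStatus: 'HIT' };
  const html = await fetchFromOrigin(host, urlPath);
  writeCached(cacheDir, host, urlPath, html);
  return { html, cacheStatus: 'MISS' };
}
```

Keeping one directory per host is a deliberate choice in this sketch: it makes it cheap to drop everything belonging to a single blog later.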

We invalidate the cache whenever the author publishes a new post or if any of the posts is updated or if the user reconfigures their blog via the dashboard. Purging is handled via a message queue, but the details are out of the scope of this article.
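Without going into the queue itself, the handler on each edge node can be very small. Here is a hedged sketch: it assumes the cache keeps one directory per host (named by a hash of the host name), that a purge message looks like `{ host: 'blog.example.com' }`, and that we purge a whole blog at once. The message shape, the directory layout and the function names are assumptions for illustration, not our actual implementation.

```javascript
const crypto = require('crypto');
const fs = require('fs');
const path = require('path');

// Assumed cache layout: every host has its own directory under cacheDir.
function hostDir(cacheDir, host) {
  const name = crypto.createHash('sha256').update(host.toLowerCase()).digest('hex');
  return path.join(cacheDir, name);
}

// Handle one purge message by deleting everything cached for that host.
// Returns true if there was something to delete.
function handlePurgeMessage(cacheDir, message) {
  const dir = hostDir(cacheDir, message.host);
  const existed = fs.existsSync(dir);
  fs.rmSync(dir, { recursive: true, force: true }); // no error if already gone
  return existed;
}
```

Whatever queue you use, each edge node would subscribe to it and call `handlePurgeMessage` for every message it receives; the next request for that blog then goes back to the origin and repopulates the cache.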

At this point, I had handled all three parts. DNS resolution + SSL at edge + Cache Layer = ⚡️blazing fast CDN

To summarize, here is what we achieved by rolling out our own CDN:

Firstly, I saved $$$. I didn't code things from scratch. DNS resolution is handled by rock solid AWS Route 53 and SSL generation is handled by the well tested lua-resty-auto-ssl package. The only thing I coded was the Cache Layer, which was pretty straightforward.

Superior Cache Hit ratio. If you use a third party CDN like Cloudflare, the cached items will be evicted if they are not frequently accessed. With our own CDN, we can cache items for as long as we wish, which results in a superior HIT ratio.

We can add more edge nodes with no extra work. It's just a matter of creating the node and adding its IP to Route 53.

We don't pay for SSL management.

We can always tweak the CDN and heavily customize it as per our needs which is difficult with a third party CDN.

All the Hashnode powered blogs have a TTFB value < 100ms in most cases and have a Google PageSpeed score of 99 - 100.

I went with the DIY route because no other existing CDN solution caters to the needs of SaaS businesses. The only viable solution is Cloudflare (Enterprise plan), which is too pricey for a small startup. However, we still use Cloudflare for Hashnode community and are very happy with it. You can read more about our experience here. I recommend this DIY approach only if you need to offer CDN to your customers and want to enforce SSL (which is a must these days). If that's not the case, you should just use Cloudflare.